A new “image analysis pipeline” is giving scientists rapid new insight into how disease or injury has changed the body, down to the individual cell.

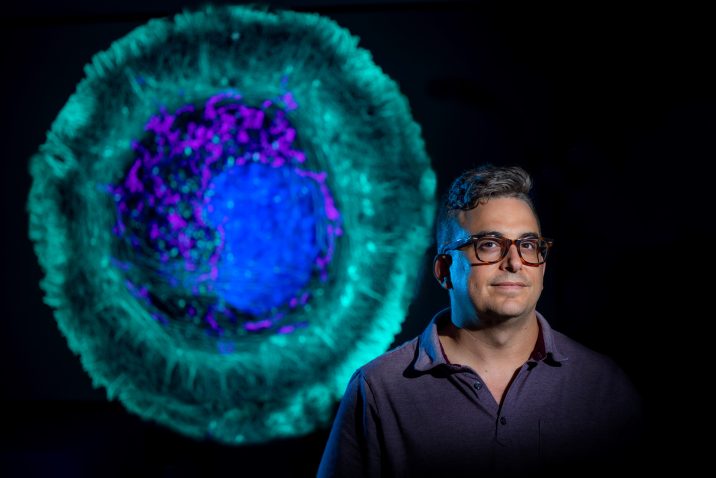

It’s called TDAExplore. It takes the detailed imaging provided by microscopy and pairs it with a hot area of mathematics called topology, which provides insight on how things are arranged, and with the analytical power of artificial intelligence. The result is, for example, a new perspective on changes in a cell resulting from ALS and where in the cell they happen, says Dr. Eric Vitriol, cell biologist and neuroscientist at the Medical College of Georgia.

It is an “accessible, powerful option” for using a personal computer to generate quantitative — measurable and consequently objective — information from microscopic images that likely could be applied as well to other standard imaging techniques like X-rays and PET scans, they report in the journal Patterns.

“We think this is exciting progress into using computers to give us new information about how image sets are different from each other,” Vitriol says. “What are the actual biological changes that are happening, including ones that I might not be able to see, because they are too minute, or because I have some kind of bias about where I should be looking.”

At least in the analyzing data department, computers have our brains beat, the neuroscientist says, not just in their objectivity but in the amount of data they can assess. Computer vision, which enables computers to pull information from digital images, is a type of machine learning that has been around for decades, so he and his colleague and fellow corresponding author Dr. Peter Bubenik, a mathematician at the University of Florida and an expert on topological data analysis, decided to partner the detail of microscopy with the science of topology and the analytical might of AI. Topology and Bubenik were key, Vitriol says.

Topology is “perfect” for image analysis because images consist of patterns, of objects arranged in space, he says, and topological data analysis (the TDA in TDAExplore) helps the computer also recognize the lay of the land, in this case where actin has moved or changed density. Actin is a protein and an essential building block of the fibers, or filaments, that help give cells shape and movement. The system is also efficient: instead of needing literally hundreds of images to train the computer to recognize and classify them, it can learn on 20 to 25 images.

Part of the magic is the computer is now learning the images in pieces they call patches. Breaking microscopy images down into these pieces enables more accurate classification, less training of the computer on what “normal” looks like, and ultimately the extraction of meaningful data, they write.
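To make the idea of patches concrete, here is a minimal Python sketch that cuts a small synthetic image into square tiles. It is an illustration only: the function name `extract_patches`, the fixed grid layout and the 16-pixel patch size are all assumptions made for this example, and the way TDAExplore itself selects and sizes its patches may well differ.

```python
def extract_patches(image, patch_size):
    """Split a 2-D image (list of rows) into non-overlapping square patches.

    Hypothetical helper for illustration; not TDAExplore's own sampling scheme.
    Returns a list of ((top, left), patch) pairs so each patch keeps its location.
    """
    height = len(image)
    width = len(image[0])
    patches = []
    # Step across the image in patch-sized strides; partial edge tiles are dropped.
    for top in range(0, height - patch_size + 1, patch_size):
        for left in range(0, width - patch_size + 1, patch_size):
            patch = [row[left:left + patch_size]
                     for row in image[top:top + patch_size]]
            patches.append(((top, left), patch))
    return patches


# Synthetic 64x64 stand-in for a microscopy image (values are arbitrary).
image = [[(r * 64 + c) % 256 for c in range(64)] for r in range(64)]
patches = extract_patches(image, 16)
print(len(patches), "patches, first located at", patches[0][0])
```

Keeping the `(top, left)` position with each tile is what later allows a result to be traced back to a region of the cell rather than to the image as a whole.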

No doubt microscopy, which enables close examination of things not visible to the human eye, produces beautiful, detailed images and dynamic video that are a mainstay for many scientists. “You can’t have a college of medicine without sophisticated microscopy facilities,” he says.

But to first understand what is normal and what happens in disease states, Vitriol needs detailed analysis of the images, like the number of filaments; where the filaments are in the cells — close to the edge, the center, scattered throughout — and whether some cell regions have more.

The patterns that emerge in this case tell him where actin is and how it’s organized — a major factor in its function — and where, how and if it has changed with disease or damage.

As he looks at the clustering of actin around the edges of a central nervous system cell, for example, the assemblage tells him the cell is spreading out, moving about and sending out projections that become its leading edge. In this case, the cell, which has been essentially dormant in a dish, can spread out and stretch its legs.

Part of the problem with scientists analyzing the images directly and calculating what they see is that it’s time consuming, and that even scientists have biases.

As an example, and particularly with so much action happening, their eyes may land on the familiar: in Vitriol’s case, the actin at the leading edge of a cell. When he looks again at the dark frame around the cell’s periphery, which clearly indicates the actin clustering there, it might seem that this is the major point of action.

“How do I know that when I decide what’s different that it’s the most different thing or is that just what I wanted to see?” he says. “We want to bring computer objectivity to it and we want to bring a higher degree of pattern recognition into the analysis of images.”

AI is known to be able to “classify” things, like recognizing a dog or a cat every time, even if the picture is fuzzy, by first learning many millions of variables associated with each animal until it knows a dog when it sees one, but it can’t report why it’s a dog. That approach, which requires so many images for training purposes and still doesn’t provide many image statistics, does not really work for his purposes, which is why he and his colleagues made a new classifier that was restricted to topological data analysis.

The bottom line is that the unique coupling used in TDAExplore efficiently and objectively tells the scientists where and how much the perturbed cell image differs from the training, or normal, image, information which also provides new ideas and research directions, he says.

Back to the cell image that shows the actin clustering along its perimeter: while the “leading edge” was clearly different with perturbations, TDAExplore showed that some of the biggest changes actually were inside the cell.

“A lot of my job is trying to find patterns in images that are hard to see,” Vitriol says, “because I need to identify those patterns so I can find some way to get numbers out of those images.” His bottom lines include figuring out how the actin cytoskeleton works and what goes wrong in conditions like ALS. The filaments provide the scaffolding for the cytoskeleton, which in turn provides support for neurons.

Some machine learning models that require hundreds of images for training don’t describe which part of the image contributed to the classification, the investigators write. Such huge amounts of data, which might include some 20 million variables, require a supercomputer to analyze. The new system instead needs comparatively few high-resolution images and characterizes the “patches” that led to the selected classification. In a handful of minutes, the scientist’s standard personal computer can complete the new image analysis pipeline.
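One way to picture how patch-level results can explain an image-level call is sketched below in Python. Everything here is assumed for illustration: the function name `classify_image`, the made-up scores, the zero threshold and the choice to average are not taken from the study, which may combine patch results differently.

```python
def classify_image(patch_scores, threshold=0.0):
    """Label an image from per-patch scores and rank the patches behind the label.

    Hypothetical sketch: patch_scores is a list of ((top, left), score) pairs,
    where a positive score is assumed to lean toward "perturbed".
    """
    mean_score = sum(score for _, score in patch_scores) / len(patch_scores)
    label = "perturbed" if mean_score > threshold else "normal"
    # Sort so the patches that pushed hardest toward the chosen label come first.
    sign = 1 if label == "perturbed" else -1
    ranked = sorted(patch_scores, key=lambda item: sign * item[1], reverse=True)
    return label, mean_score, ranked


# Made-up scores for four patches, keyed by their position in the image.
scores = [((0, 0), -0.2), ((0, 16), 0.1), ((16, 0), 0.9), ((16, 16), 0.4)]
label, mean_score, ranked = classify_image(scores)
print(label, "driven most by the patch at", ranked[0][0])
```

Because each score stays attached to a location, the same numbers that decide the label also point to where in the image the difference lies.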

The ability to get more and better information from images ultimately means that information generated by basic scientists like Vitriol, which often changes what are considered the facts of a disease and how it’s treated, is more accurate. That might include being able to recognize changes, like those the new system pointed out inside the cell, that have been previously overlooked.

Currently, scientists apply stains to enable better contrast, then use software to pull out information about what they are seeing in the images, like how the actin is organized into bigger structures, he says.

“We had to come up with a new way to get relevant data from images and that is what this paper is about.”

The published study provides all the pieces for other scientists to use TDAExplore.

The research was supported by the National Institutes of Health.

Augusta University
